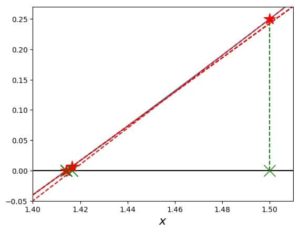

Which leads me to suspect that other derivative-based methods (such as Newton-Raphson) will have similar problems. If I adjust the step length for the FDM (finite difference method) I get slightly better results, but I can't make it small enough before it stops working. The Newton-Raphson method is an open root-finding method, which means that it needs only a single initial guess to reach the solution, instead of narrowing down an interval between two initial guesses. And I believe the inaccuracy comes from the finite difference approximation to the gradient used in BFGS. In numerical analysis, Newton's method, also known as the Newton-Raphson method, named after Isaac Newton and Joseph Raphson, is a root-finding algorithm which produces successively better approximations to the roots (or zeroes) of a real-valued function. I'm pretty sure I'm not hitting machine precision problems. stable algorithm that is less likely to wander off far from a maximum.
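The step-length trade-off mentioned above can be illustrated with a small experiment. This is only a sketch under stated assumptions: the test function (`math.exp` at `x0 = 1.0`) and the helper names `forward_diff` and `central_diff` are chosen here for illustration and are not taken from the original problem. A forward difference has a truncation error proportional to the step `h` and a rounding error proportional to machine epsilon divided by `h`, so in double precision its best accuracy is typically reached near `h ≈ 1e-8` and is limited to roughly 7 to 8 correct digits. Shrinking `h` further makes the result worse, not better.

```python
import math

def forward_diff(f, x, h):
    # One-sided difference: truncation error O(h), rounding error O(eps / h).
    return (f(x + h) - f(x)) / h

def central_diff(f, x, h):
    # Symmetric difference: truncation error O(h^2), rounding error O(eps / h).
    return (f(x + h) - f(x - h)) / (2.0 * h)

# Hypothetical test case: d/dx exp(x) at x0 = 1 is exp(1), so the exact
# derivative is known and the error of each approximation can be measured.
x0 = 1.0
exact = math.exp(x0)

steps = [10.0 ** (-k) for k in range(1, 16)]  # h = 1e-1, 1e-2, ..., 1e-15
fwd_errs = [abs(forward_diff(math.exp, x0, h) - exact) for h in steps]
cen_errs = [abs(central_diff(math.exp, x0, h) - exact) for h in steps]

for h, fe, ce in zip(steps, fwd_errs, cen_errs):
    print(f"h = {h:.0e}   forward error = {fe:.2e}   central error = {ce:.2e}")
```

In the printed table the forward-difference error first falls as `h` shrinks and then rises again once rounding dominates. If a solver such as BFGS is fed gradients of this quality, it may stall at around 7 digits. A central difference, or an analytic gradient where one is available, is one possible way around that limit.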

In a practical problem I need to find the solution to: Where $ f : \mathbb$ and then minimize it using BFGS. This works well to some degree, but in some cases it fails to provide an accurate result beyond 7 digits or so. For my application this is not good enough.

Visual analysis of these problems is done with the Sage computer algebra system. Because Sage is free software, it is affordable to many people, teachers and students alike. Sage has a large set of modern tools, including groupware and web availability.

When started at an initial guess close to a solution, Newton’s method is well defined and converges quadratically to a solution of (1), unless the Jacobian of f is singular or the second partial derivatives of f are not bounded. Hence, if the user has an idea of where a solution might be lying, Newton’s method is well known to be the fastest and most effective method for solving (1). The interested reader will find an excellent survey of Newton’s method in. To ensure global convergence (i.e., to ensure convergence to a solution from any initial point), suitable modifications of the Newton method are needed. An example of a globally convergent variant is the so-called Levenberg–Marquardt method. This involves a modification of Newton’s search direction at each step of the method. Without this modification, however, quadratic convergence can only be ensured when the initial guess belongs to a quadratic convergence region, namely a region from which every starting point generates a quadratically convergent Newton sequence.

The multivariate Newton-Raphson method also raises the above questions. Taylor expansions for multi-variable functions. We follow the ideas of Newton’s method for one equation in one variable: approximate the nonlinear f by a linear function and find the root of that function.
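The linearization idea can be sketched for a system of two equations in two unknowns. Everything in this example is an assumption made for illustration: the function name `newton_2x2`, the test system (x² + y² = 4 and xy = 1), and the starting point are not from the original text. Each step replaces the nonlinear system by its first-order Taylor approximation and solves the resulting 2×2 linear system J·d = −F, here with Cramer's rule. This is the plain Newton iteration, without the globalizing modifications (such as Levenberg–Marquardt) mentioned above.

```python
import math

def newton_2x2(F, J, x, y, tol=1e-12, max_iter=50):
    """Plain Newton iteration for two equations in two unknowns.

    F(x, y) returns the residuals (f1, f2); J(x, y) returns the Jacobian
    as ((df1/dx, df1/dy), (df2/dx, df2/dy)).
    """
    for _ in range(max_iter):
        f1, f2 = F(x, y)
        if max(abs(f1), abs(f2)) < tol:
            return x, y
        (a, b), (c, d) = J(x, y)
        det = a * d - b * c
        if det == 0.0:
            raise ZeroDivisionError("Jacobian is singular at the current iterate")
        # Solve J * (dx, dy) = -(f1, f2) by Cramer's rule.
        dx = (-f1 * d + b * f2) / det
        dy = (-a * f2 + c * f1) / det
        x, y = x + dx, y + dy
    raise RuntimeError("Newton iteration did not converge")

# Hypothetical test system: x^2 + y^2 = 4 and x*y = 1.
def F(x, y):
    return (x * x + y * y - 4.0, x * y - 1.0)

def J(x, y):
    return ((2.0 * x, 2.0 * y), (y, x))

root_x, root_y = newton_2x2(F, J, 2.0, 0.5)
print(root_x, root_y)
```

Starting from (2, 0.5), which is close to a solution, the iteration should settle within a handful of steps. From a poor starting point the same code can diverge or hit a singular Jacobian, which is the situation the globally convergent variants are designed to handle.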

INTRODUCTION Finding the solution to the set of nonlinear equations $F(x) = (f_1, f_2, \ldots, f_n) = 0$ has been a problem for many years. For systems of equations the Newton-Raphson method is widely used, especially for the equations arising from the solution of differential equations. Basic results on Newton’s method and comprehensive lists of references can be found, e.g., in the books by Dennis and Schnabel, Ostrowski, Ortega and Rheinboldt, Deuflhard, and Corless and Fillion. The paper also discusses single-variable and multivariable Newton-Raphson techniques.

As a result, studies of Newton’s method form an extremely active area of research, with new variants being constantly developed and tested. Problem (1) arises in practically every pure and applied discipline, including mathematical programming, engineering, physics, health sciences and economics.
